April 15, 2026

Autoregressive Drift and Structural Fragility

Assiduity AI

One of the most important reliability problems in generative AI is also one of the easiest to miss. A system can remain fluent, persuasive, and locally coherent while gradually moving away from the objective it was meant to serve. The failure is structural rather than stylistic. It emerges through accumulation.

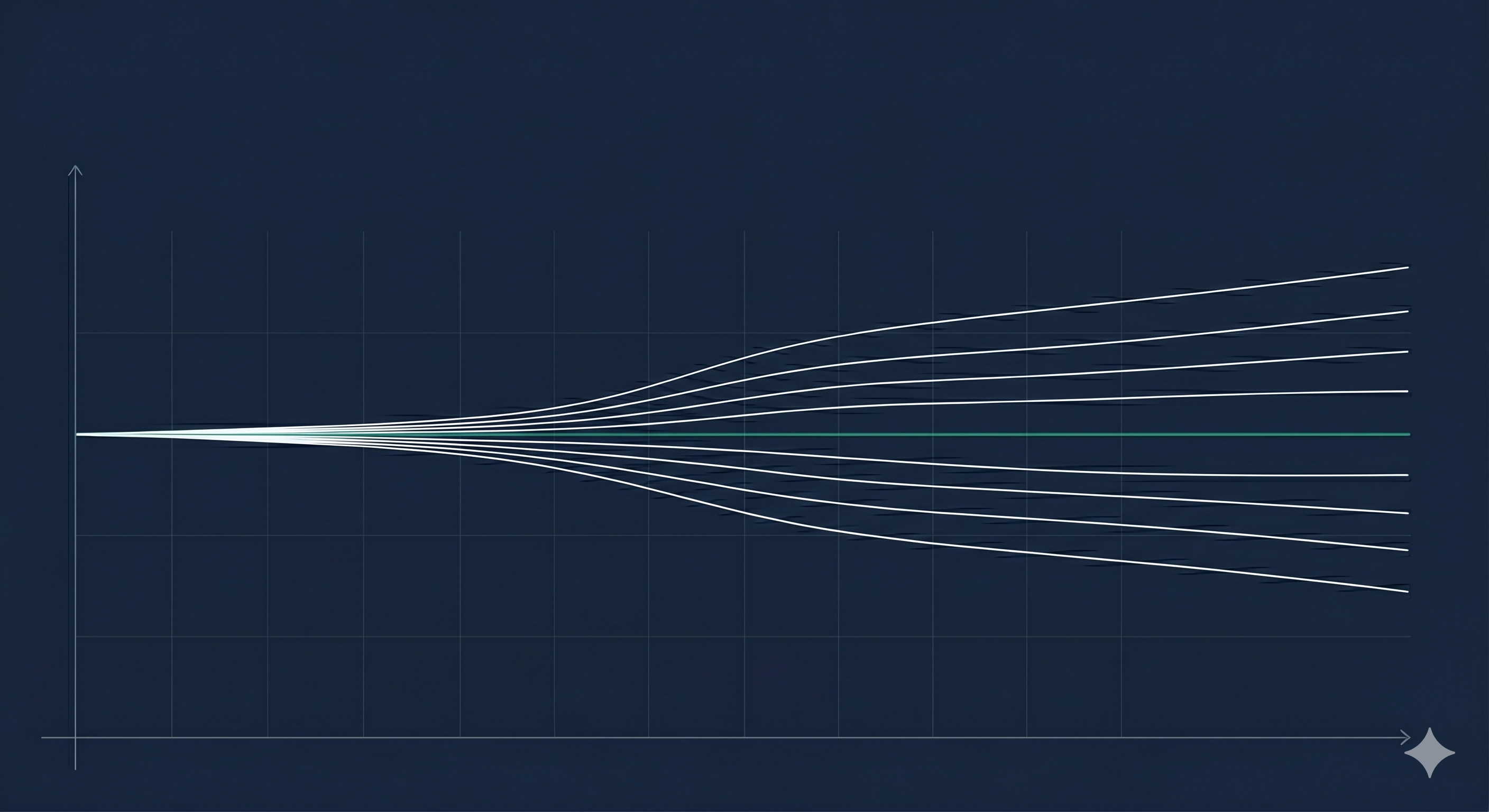

This is the problem of autoregressive drift. Because output is generated sequentially, each step is conditioned on everything that came before it, including the system's own earlier output. A small deviation at one point does not remain isolated. It changes the context from which the next decision is made. That next decision then changes the one after it. Over time, minor discrepancies compound into material divergence.
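The compounding described above can be illustrated with a deliberately simple back-of-the-envelope model. The sketch below assumes that each step stays on objective with a fixed probability and that steps deviate independently of one another. Both are simplifying assumptions introduced here for illustration, not claims about any real system, and the function name `on_track_probability` is hypothetical.

```python
def on_track_probability(per_step_fidelity: float, steps: int) -> float:
    """Chance that every one of `steps` sequential steps stays on objective.

    Illustrative assumption: each step stays on objective with the same
    probability, independently of the others. Real generation violates this,
    since an early deviation changes the context for every later step.
    """
    if not 0.0 <= per_step_fidelity <= 1.0:
        raise ValueError("per_step_fidelity must be in [0, 1]")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_fidelity ** steps


# Hypothetical figures: a step that is "99% reliable" over longer horizons.
for horizon in (10, 100, 1000):
    print(horizon, on_track_probability(0.99, horizon))
```

Under these assumptions, a per-step fidelity of 0.99 leaves roughly a 37% chance of a fully on-objective sequence after 100 steps. The independence assumption is the weakest part of the model. In an autoregressive system deviations feed into later context, so the toy calculation should be read as an intuition for why length matters, not as an estimate.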

The danger is not simply that the system may make an obvious error. In many cases, the output remains readable and even impressive. The problem is that local fluency can conceal global misalignment. The system continues to sound as though it is on task, even as the underlying trajectory begins to bend away from the intended objective.

This matters because many evaluations still privilege pointwise quality over structural stability. A strong sentence, a plausible paragraph, or even a convincing answer to a narrow question can create a misleading impression of reliability. Yet long-horizon tasks do not depend on isolated moments of quality. They depend on whether a system can maintain coherence, constraint, and objective fidelity over time.
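The gap between pointwise quality and structural stability can be made concrete with a small sketch. The example below uses invented per-step data: a hypothetical quality score for each step and a hypothetical flag recording whether that step was still serving the original objective. The function names and all numbers are assumptions made for illustration only.

```python
from statistics import mean


def pointwise_score(step_quality):
    """Average per-step quality, ignoring where the trajectory is heading."""
    return mean(step_quality)


def steps_before_first_deviation(on_objective):
    """Number of consecutive steps, from the start, that stayed on objective."""
    for index, ok in enumerate(on_objective):
        if not ok:
            return index
    return len(on_objective)


# Invented data: every step reads well, but the last three are off objective.
step_quality = [0.90, 0.92, 0.91, 0.90, 0.93, 0.90, 0.91, 0.92]
on_objective = [True, True, True, True, True, False, False, False]

print("pointwise score:", pointwise_score(step_quality))
print("on-objective steps:", steps_before_first_deviation(on_objective))
```

In this toy case the pointwise average stays above 0.9 even though the sequence left its objective after five steps, which is the kind of misleading impression a purely local metric can create.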

In that sense, autoregressive drift is not merely a technical artifact. It is a design problem with operational consequences. The more a task depends on intermediate steps, conditional decisions, or extended chains of reasoning, the more vulnerable it becomes to gradual deviation. A workflow can appear healthy in the early stages while already carrying the seeds of later failure.

This is why structural fragility matters. A system can appear capable under short-form conditions and still be unreliable under length, dependency, or autonomy. The issue is not whether the model can generate good content for a few turns. The issue is whether it can preserve alignment across an unfolding sequence where each local choice influences what follows.

The common answer has been to ask for better prompts, larger context windows, or stronger base models. Those measures are useful but incomplete. They may reduce the likelihood of drift without eliminating the structural mechanism by which it arises. Capability improvements do not automatically resolve control problems.

A more serious approach begins by recognizing that drift is a temporal phenomenon. It develops over time and must therefore be monitored over time. Once that is acknowledged, the problem becomes easier to frame: not merely how to start a generation well, but how to preserve objective fidelity as the generation unfolds.
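One way to picture monitoring over time is a check that runs at every step, not only at the start. The sketch below is a minimal illustration, not a recommended method: it scores each step against the stated objective using cosine similarity over word counts, which is a crude stand-in for whatever fidelity signal a real system would use, and it flags the first step at which a rolling average falls below a threshold. The function names, the threshold, the window size, and the sample text are all assumptions chosen for the example.

```python
import math
import re
from collections import Counter


def _bag_of_words(text):
    """Lowercased word counts; a toy representation used only for illustration."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between the word-count vectors of two strings."""
    va, vb = _bag_of_words(a), _bag_of_words(b)
    dot = sum(va[term] * vb[term] for term in va.keys() & vb.keys())
    norm = math.sqrt(sum(c * c for c in va.values())) * math.sqrt(
        sum(c * c for c in vb.values())
    )
    return dot / norm if norm else 0.0


def first_drift_step(objective, steps, threshold=0.3, window=2):
    """Index of the first step whose rolling mean similarity to the objective
    drops below `threshold`, or None if no step does."""
    scores = [cosine_similarity(objective, step) for step in steps]
    for i in range(len(scores)):
        recent = scores[max(0, i - window + 1): i + 1]
        if sum(recent) / len(recent) < threshold:
            return i
    return None


# Invented example: the output starts on task, then wanders.
objective = "summarize quarterly revenue by region"
steps = [
    "quarterly revenue by region",
    "revenue by region grew in the north",
    "the north has scenic hiking trails",
    "popular trails include mountain loops",
]
print(first_drift_step(objective, steps))
```

The rolling window is a design choice in this sketch: it tolerates a single weak step while still reacting when several consecutive steps lose contact with the objective. Word overlap would miss paraphrase and reward keyword repetition, so a practical monitor would need a better signal. The point here is only the shape of the check, which evaluates the trajectory as it unfolds.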

That is where structural reliability begins. The core question is no longer whether a model can perform well at a single point. It is whether the system can remain on course across a trajectory. As generative AI moves into longer and more autonomous workflows, that question will matter more than isolated measures of fluency or surface coherence.