April 15, 2026

Governance for Agentic Systems

Assiduity AI

Governance becomes more difficult as systems become more capable, but capability alone is not what makes governance hard. The deeper challenge is that advanced systems increasingly operate across time. They do not merely answer a prompt. They interpret goals, carry state, make intermediate choices, and pursue outcomes through sequences of dependent actions.

That changes the governance problem fundamentally. Static controls remain necessary, but they are no longer sufficient. Policy documents, access restrictions, benchmark thresholds, and pre-deployment testing all matter. Yet they govern the conditions under which a system may operate, not whether the system remains on objective while it is operating.

This distinction is becoming more important as models move toward agentic workflows. A capable system may begin within policy and still drift materially in execution. It may interpret an instruction too broadly, prioritize the wrong subgoal, compound an early error, or keep pursuing a flawed intermediate state. None of these failures necessarily reflects malicious intent or obvious incompetence. They are ordinary failures of execution, and they go uncorrected when runtime oversight is insufficient.

In that sense, governance for agentic systems is not only about where an objective comes from. Human oversight remains central to setting goals, permissions, and boundaries. But once a system has been given an objective, a second question follows: how does the system pursue that objective through time? Governance must address both.

This is why runtime visibility matters. If an autonomous workflow unfolds across multiple dependent steps, governance cannot assume that a strong initial prompt or a carefully bounded policy will be enough. Oversight must extend into execution. The relevant question is whether the system continues to act faithfully to the intended task, constraints, and priorities as conditions evolve.

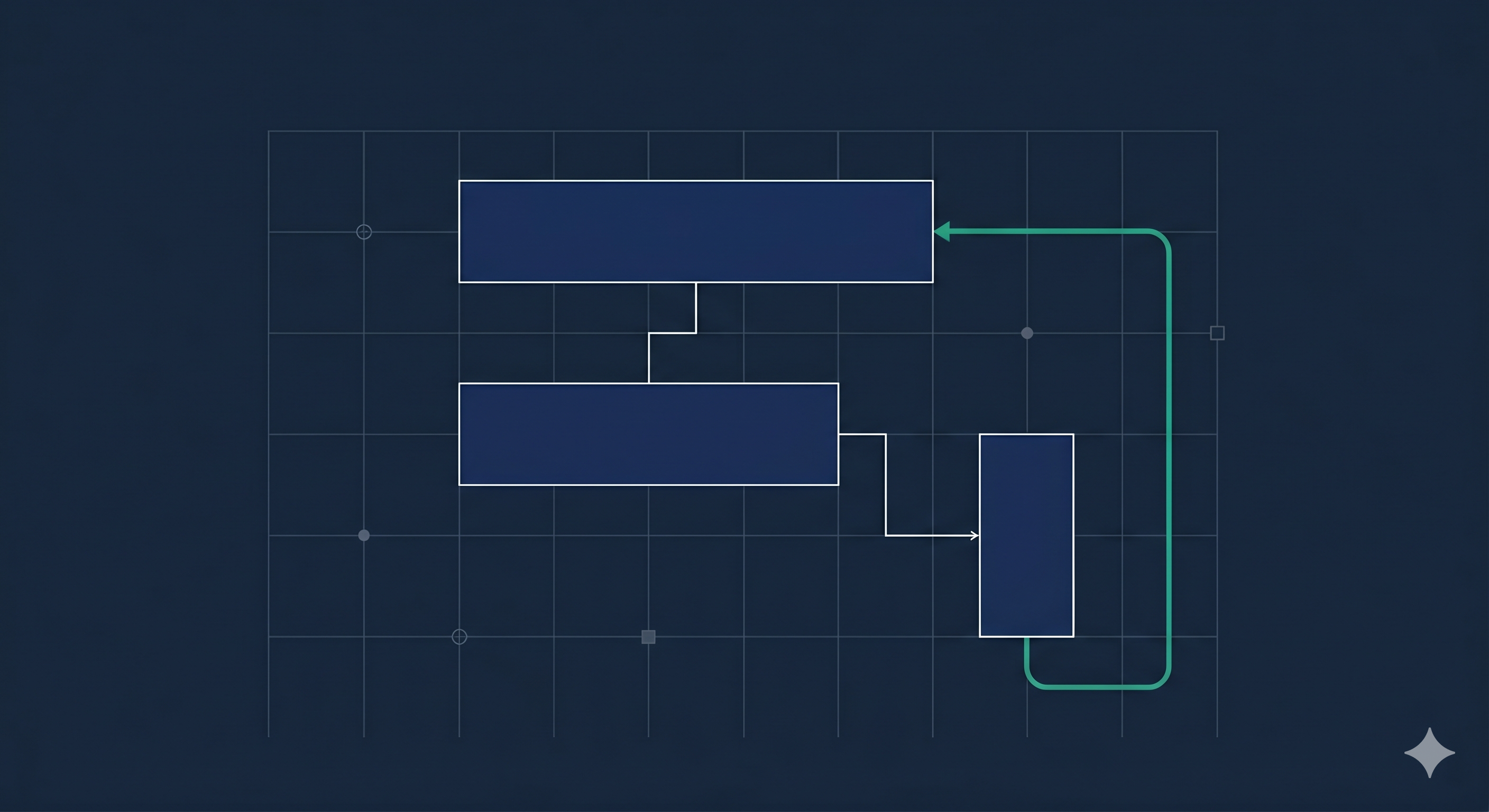

That does not imply constant human intervention in every low-level action. It implies something more practical: architectures that can monitor trajectory, detect meaningful deviation, and support intervention when necessary. In other words, governance must become more operational. It must be able to observe not only what the system has been told to do, but what it is in fact doing as the sequence unfolds.
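The kind of architecture described in the previous paragraph can be made concrete with a small sketch. The Python below assumes a hypothetical setup in which each agent step arrives as an action name plus a relevance score between 0 and 1 produced by some separate evaluator; how that score is computed is outside the sketch. The names `TrajectoryMonitor`, `Step`, and `Verdict`, the 0.5 relevance floor, and the three-step drift limit are all illustrative assumptions, not a reference design. It shows the three functions named above: recording the trajectory, treating a sustained run of low-relevance steps as meaningful deviation, and returning a verdict that a caller could use to pause for human review or stop.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    CONTINUE = "continue"  # step looks consistent with the objective
    ESCALATE = "escalate"  # sustained drift: pause and ask a human
    HALT = "halt"          # hard boundary violated: stop the workflow


@dataclass
class Step:
    action: str       # hypothetical action name, e.g. "search"
    relevance: float  # hypothetical 0..1 score from an external evaluator


@dataclass
class TrajectoryMonitor:
    allowed_actions: set[str]        # permissions granted up front by humans
    relevance_floor: float = 0.5     # assumed threshold for a "drifting" step
    drift_limit: int = 3             # consecutive drifting steps that trigger escalation
    history: list[Step] = field(default_factory=list)
    drift_run: int = field(default=0, init=False)

    def observe(self, step: Step) -> Verdict:
        # Keep the full trajectory so reviewers can see what actually happened.
        self.history.append(step)

        # A boundary violation is not a matter of degree: stop immediately.
        if step.action not in self.allowed_actions:
            return Verdict.HALT

        # One weak step is tolerated; only a consecutive run counts as
        # meaningful deviation, so humans are not pulled into every action.
        if step.relevance < self.relevance_floor:
            self.drift_run += 1
        else:
            self.drift_run = 0

        if self.drift_run >= self.drift_limit:
            return Verdict.ESCALATE
        return Verdict.CONTINUE


if __name__ == "__main__":
    monitor = TrajectoryMonitor(allowed_actions={"search", "summarize", "draft_reply"})
    trajectory = [
        Step("search", 0.9),
        Step("summarize", 0.4),
        Step("summarize", 0.3),
        Step("draft_reply", 0.2),     # third low-relevance step in a row
        Step("delete_records", 0.9),  # outside the granted permissions
    ]
    for step in trajectory:
        # Prints: continue, continue, continue, escalate, halt
        print(step.action, monitor.observe(step).value)
```

The asymmetry is deliberate: permission boundaries are checked on every step and halt at once, while drift from the objective is judged over a window of steps. That mirrors the distinction in the text between static controls, which remain necessary, and runtime oversight of the trajectory itself.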

The stakes are higher in domains where outputs are consequential, multi-step, or difficult to reverse. Enterprise operations, compliance-sensitive workflows, decision support, and other high-consequence settings all require more than output that looks correct. They require confidence that the system stays aligned with its intended task and constraints throughout execution.

The broader implication is clear. As AI systems become more agentic, governance must become more dynamic. It must move beyond static approval and toward runtime oversight. The future of trustworthy autonomy will depend not only on what systems are allowed to do, but on whether they can be kept on objective while doing it.

That is the shift now underway. Governance is no longer only a gate before deployment. It is increasingly a discipline of monitoring, control, and intervention during execution. Systems that act through time must also be governed through time.