April 15, 2026

Runtime Control for Generative Systems

Assiduity AI

Generative systems fail gradually before they fail obviously.

The failure is rarely announced by a sudden collapse in fluency. More often, it appears as a slow accumulation of deviation: the system continues to produce language that is locally coherent, yet becomes progressively less faithful to the objective it was meant to pursue.

That distinction matters because advanced autonomy is not defined by whether a model can produce an impressive paragraph. It is defined by whether the system can remain aligned to intent across a sequence of dependent steps. In short outputs, local prediction may be enough to create the appearance of success. In longer workflows, it is not.

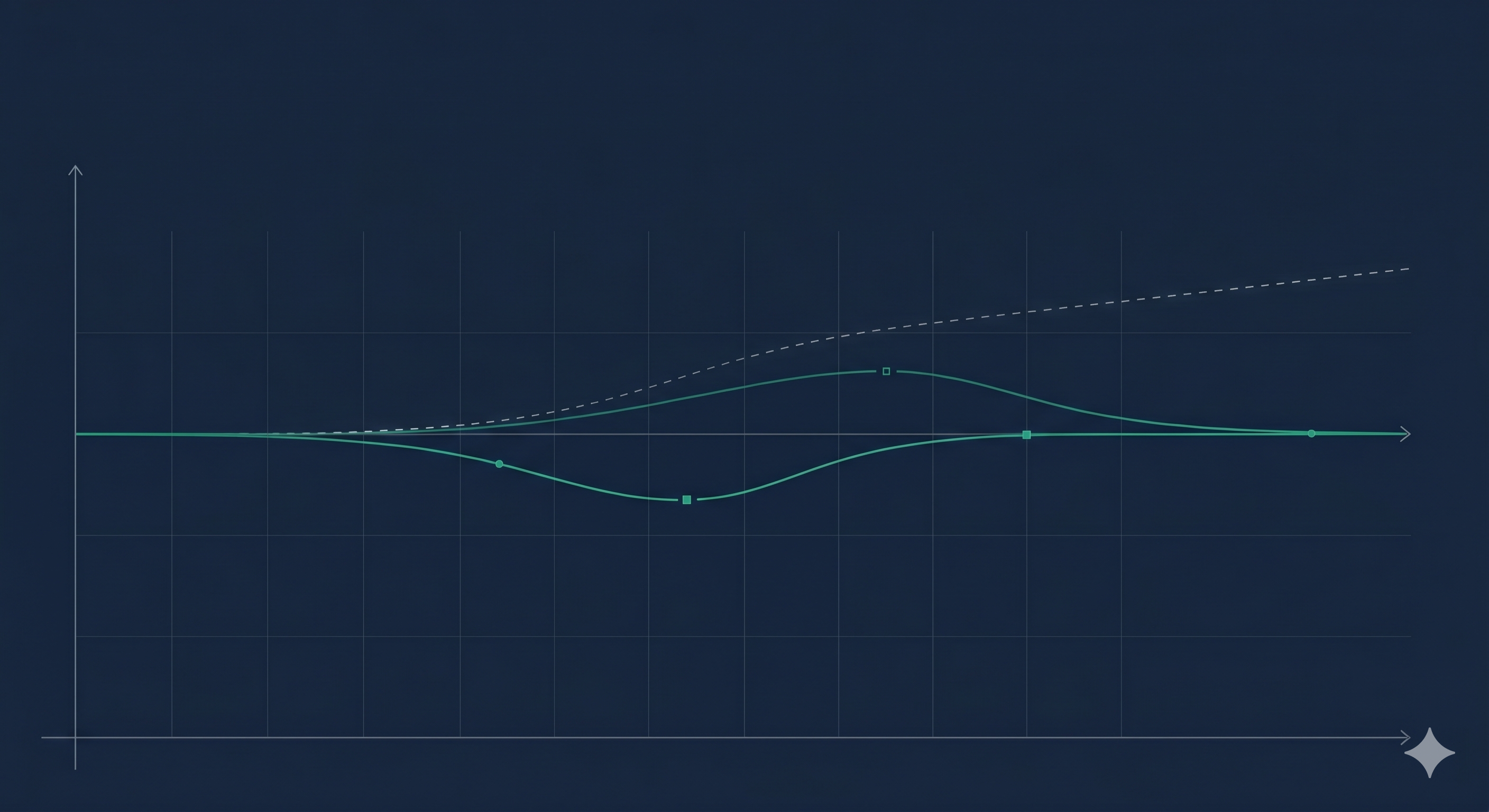

This is the structural problem of autoregressive drift. Each token conditions the next. Small deviations that appear harmless in isolation can compound over time into meaningful divergence from task, plan, or policy. The result is a system that sounds capable while moving quietly off course.
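A rough way to see the compounding is to assume, purely for illustration, that each step stays on objective with a fixed probability, independently of the others. Real generation is not independent step to step, and the function name and the 0.99 figure below are hypothetical, but the arithmetic shows how a small per-step deviation rate erodes long sequences.

```python
def on_track_probability(per_step_reliability: float, steps: int) -> float:
    """Probability that every one of `steps` steps stays on objective.

    Assumption (illustrative only): each step stays on objective with the
    same probability, independently of the others.
    """
    if not 0.0 <= per_step_reliability <= 1.0:
        raise ValueError("per_step_reliability must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_reliability ** steps


if __name__ == "__main__":
    # Compare a short output with progressively longer workflows.
    for n in (10, 100, 500):
        print(n, round(on_track_probability(0.99, n), 3))
```

Under this simplified model, a step that is 99% reliable yields roughly a 90% chance of a clean 10-step run but only about a 37% chance of a clean 100-step run.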

The conventional response is to improve prompts, enlarge context windows, or build stronger base models. Those measures matter, but they do not fully resolve the underlying control problem. Better capability is not the same thing as better trajectory discipline, meaning the ability to stay on the intended path from one step to the next. A system may know more, reason more fluently, and still fail to remain anchored to the objective it was given.

Runtime control addresses that gap directly. Rather than assuming the model will remain on course once prompted, a control layer evaluates, as output unfolds, whether the generation continues to track its intended objective. This changes the framing from static setup to dynamic oversight.
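As a minimal sketch of that idea, the loop below checks each generated step against the objective before accepting it and halts once a step falls below a threshold. Everything here is an assumption made for illustration: `keyword_overlap` is a toy scoring function, and `run_with_control`, the threshold value, and the sample steps are hypothetical. A production control layer would use a far richer alignment signal than word overlap.

```python
from typing import Callable, Iterable, List, Tuple


def keyword_overlap(objective: str, text: str) -> float:
    """Toy alignment score: fraction of objective words that appear in text."""
    goal = set(objective.lower().split())
    seen = set(text.lower().split())
    return len(goal & seen) / len(goal) if goal else 1.0


def run_with_control(
    objective: str,
    steps: Iterable[str],
    score: Callable[[str, str], float] = keyword_overlap,
    threshold: float = 0.5,
) -> Tuple[List[str], bool]:
    """Accept steps one at a time; stop at the first step scoring below threshold.

    Returns the accepted steps and a flag that is True only if every step
    passed the check.
    """
    accepted: List[str] = []
    for step in steps:
        if score(objective, step) < threshold:
            # Drift detected: halt instead of building on an off-objective step.
            return accepted, False
        accepted.append(step)
    return accepted, True


if __name__ == "__main__":
    objective = "summarize quarterly revenue"
    steps = [
        "summarize quarterly revenue by region",
        "quarterly revenue rose in Europe",
        "the weather in Europe was mild",
    ]
    accepted, completed = run_with_control(objective, steps)
    print(accepted, completed)
```

The design choice worth noting is that the check runs between steps rather than once at the end, so a deviation is caught before later steps depend on it. Halting is only one possible response; a control layer could equally retry or re-anchor the step.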

That shift becomes more important as model outputs become longer and more consequential. A short answer may tolerate small deviations. A multi-step autonomous workflow often will not, because each step builds on the ones before it. In these settings, reliability is not merely a matter of output quality. It is a matter of whether the system remains aligned to its objective over time.

This is why governance for advanced autonomy cannot end at model access controls, policy documents, or benchmark performance. Those are necessary, but they are not sufficient. Governance also requires visibility into execution. A system that begins well but drifts materially during generation still creates risk, even if its opening steps were strong.

The next phase of AI reliability will turn on this question. As systems move toward longer, more agentic workflows, the central issue is no longer only what the model knows. It is whether the system can remain on objective through time, under uncertainty, and across dependent steps.

Runtime control is one answer to that problem. It is not a cosmetic layer added after capability. It is part of the structural foundation required for advanced autonomy to be deployed with greater confidence in consequential settings.